This Week in Student Success

Looking beneath the surface

Was this forwarded to you by a friend? Sign up for the On Student Success newsletter and get your own copy of the news that matters sent to your inbox every week.

I’ve spent the past several days in the middle west.

Jon Magliola

In addition to family obligations, I have been lucky to spend some time with folks from the University of Iowa as well as with other readers and colleagues working in higher education.

This week I found myself thinking about how often the most important things in higher education sit below the surface. A new AI tool turns out to be a question about knowledge. A new initiative turns out to be a question about organizational capacity. A dashboard turns out to be a question about whether anyone is actually prepared to act. Looking beneath the surface, in other words, often tells us far more than looking at the headline.

AI and the Problem of Knowledge

A thoughtful post from Jeppe Stricker argues that generative AI poses not just a technological or pedagogical challenge, but an epistemological one. It forces institutions to confront questions about what counts as knowledge, how claims are validated, and who gets to judge reliability.

At first that sounds odd. After all, generative AI does not “know” things in any human sense. But if epistemology is the study of how knowledge is created and validated, then AI clearly has an epistemology of its own, and a troubling one. It can produce language that sounds authoritative while detaching fluency from truth, confidence from accountability, and form from content.

As Stricker writes:

Generative AI does something philosophically consequential to information. It decouples form from content, fluency from accuracy, and confidence from accountability. It also produces prose that reads as authoritative regardless of whether it is true or meaningful, and it does so without an author, without methodology, without a review process - indeed without any of the institutional mechanisms that academic culture has developed over centuries to make knowledge claims susceptible to evaluation by peers. [snip]

Questions that surround the quality and purpose of information are not new. They are among the oldest questions in epistemology - questions about what knowledge is and how it is brought to life. What is new is the scale and speed at which generative AI makes these questions urgent for ordinary academic work. Ancient philosophical questions now have immediate practical consequences.

Stricker’s point is that institutions tend to respond to AI through their usual bureaucratic reflexes. IT asks about licensing and security. Teaching centers focus on classroom integration. Individual faculty experiment on their own. All of those questions matter. But none of them addresses the deeper epistemological issue.

Yet, the structural response most institutions default to - assigning generative AI to whichever department has the most bandwidth or the loudest voice - produces characteristic and predictable problems. When AI sits with IT, the questions that get asked are the ones IT is trained to ask: licensing, security, access control. Legitimate concerns, but definitionally upstream of the harder questions about learning and knowledge. When AI sits with teaching and learning consultants, the focus shifts to pedagogical integration - also legitimate, but insufficient without an epistemological foundation that can anchor what responsible use actually means. When AI sits with individual faculty, innovation accumulates in pockets while institutional learning accumulates nowhere. [snip]

What these failure modes share is not incompetence, but instead a structural problem: each department optimizes for the questions it knows how to ask, and remains blind to the questions it doesn’t.

If the problem is epistemological, the people best equipped to help may not be technologists or subject-matter experts at all. One group institutions often overlook in this conversation is librarians. They are professionals trained to deal with questions about the sources, provenance, and reliability of knowledge in practical ways, and they are often well positioned to work across institutional silos.

The growing importance of AI therefore gives libraries an opportunity to play a larger role across the institution. But it will also require some rethinking of traditional service models. For this to work well, libraries will need to approach the problem primarily in a spirit of service and access rather than gatekeeping. Many already do, and institutions with libraries like these are likely to navigate these epistemological challenges most successfully.

Why Pilots Stall

Look beneath the surface and the success or failure of new initiatives often turns out to have surprisingly little to do with the quality of the idea itself. A thought-provoking post in the Super Cool & Hyper Critical newsletter offers an explanation.

The post argues that initiatives succeed only when three things line up: leadership conviction, economic logic, and organizational architecture. Without all three, even good ideas stall.

Most initiatives do not fail because the idea was weak. They fail because the organization was not structurally ready to carry them. The top of the house was unconvinced. The economics did not justify the enthusiasm. Or the system itself could not absorb the change without destabilizing something more valuable.

We like to believe that good ideas win. It is an appealing story. It flatters our sense of rationality. In mature organizations, that is rarely how it works.

Organizations are structured power systems. They are constrained by capital allocation discipline, embedded incentives, legacy architecture, regulatory exposure, institutional memory, and informal influence networks that have hardened over years.

Within that environment, ideas do not advance because they are compelling. They advance when conviction, economic coherence, and structural feasibility align. Initiatives survive because power backs them. And power backs them when those three forces move together. If you want an insanely great outcome, you need all three. Not two. Not one.

When we start a new initiative, we often mistakenly think that what we are asking for is either money or time. In reality, we are asking for a lot more:

- Asking for executive attention bandwidth in an environment where that bandwidth is already scarce.
- Consuming political capital that might be required elsewhere.
- Introducing disruption tolerance into an organization that may be optimized for stability.
- Accepting temporary volatility in performance metrics that boards and markets monitor closely.
- Destabilizing cultural equilibrium.

This argument will sound familiar to anyone who has watched higher education launch new initiatives in areas such as student success, advising reform, or AI adoption. Institutions often begin with enthusiasm and pilot projects but underestimate the organizational redesign required to support them—changes in incentives, workflows, staffing, governance, and data systems. In other words, initiatives fail not because the goal is wrong, but because the institution was never structurally prepared to execute it.

When institutions launch pilots in edtech or student success, and certainly before they launch full initiatives, it is not enough to ask whether there is interest, funding, and executive sponsorship. They also need to ask whether the pilot can actually operate within existing workflows, governance structures, and reporting systems. And they need to recognize that real change almost always disrupts current practices, and sometimes the metrics used to measure success.

Data as the New Oil

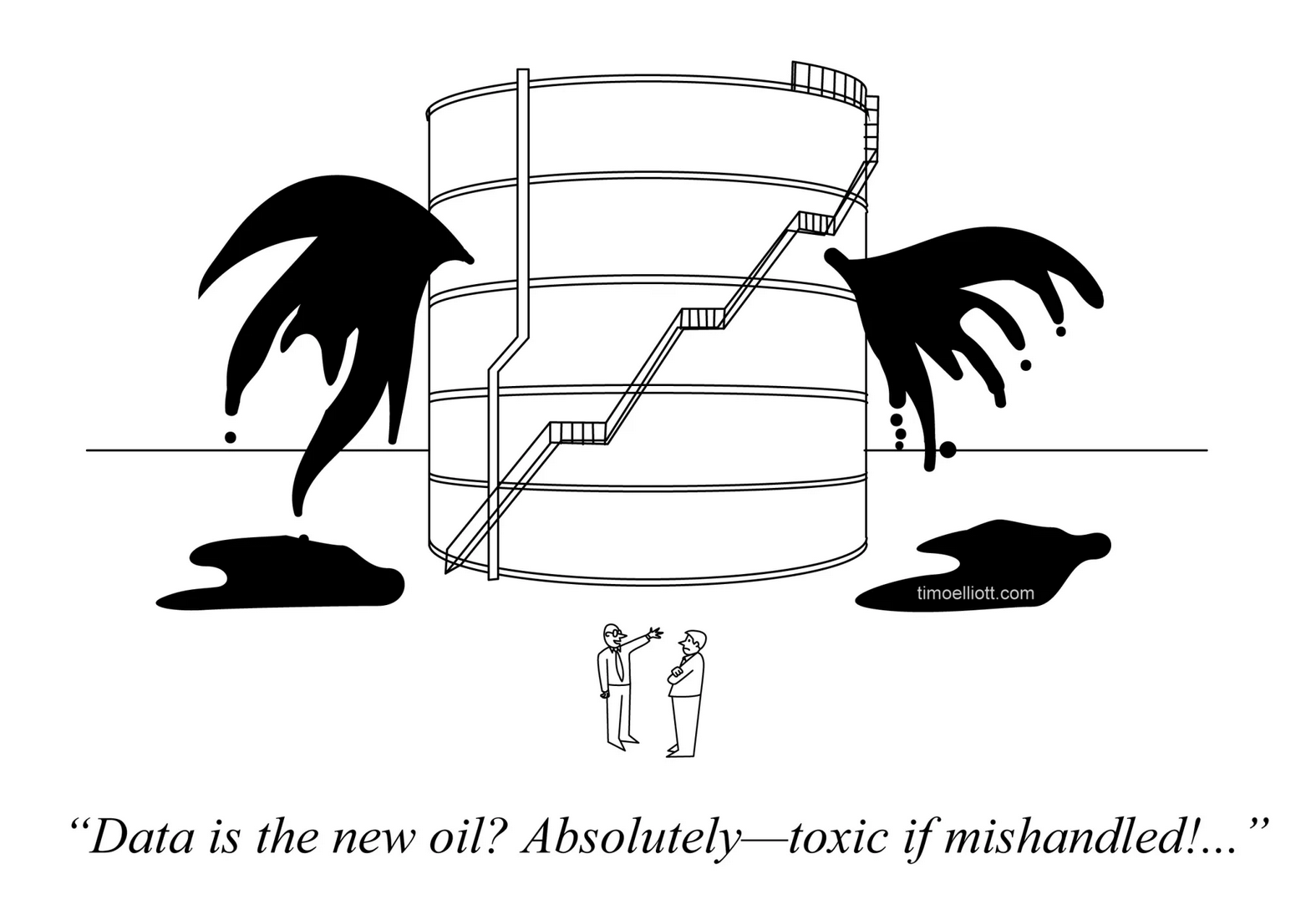

That in turn reminded me of another one of my least favorite higher education tropes: “data is the new oil” and its cousin, “data is the new soil.” I have never found either metaphor particularly helpful. But I do like this cartoon.

Dashboards Are Not Decisions

A recent post offers a sharp critique of how many data teams operate. Instead of focusing on the decisions organizations need to make, they often function as internal service desks—processing tickets, building dashboards, and measuring success by delivery speed and stakeholder satisfaction.

Many data units, the author argues, fall into what he calls the “service model trap.”

Most data teams run a service-desk model that optimises for ticket velocity and stakeholder happiness—not for business impact.

The author argues that this happens for three related reasons.

- Solution-first thinking: Data teams ask what they can build rather than what decision needs to be made.
- Technical purity over pragmatism: They optimize for rigor and elegance rather than usable action.
- Insulation from consequences: They are rarely accountable for whether their work actually changes behavior or outcomes.

The author’s argument is that the service model eventually breaks down when the business itself changes. In higher education, though, the problem is not primarily that the “business” changes. It is that data service models often deliver reports and dashboards without delivering changed practice or improved student outcomes.

His proposed solution is for data teams to shift from a service model to something closer to a product model, measuring success by decision implementation and impact. In principle I agree with the direction of this shift. In practice, however, the challenges of doing this in higher education are enormous.

Student success work in higher education simply does not operate like a business product environment. Decision authority is diffuse, many of the most important changes involve ongoing practices rather than discrete decisions, and analytics teams are often far from the advising, tutoring, classroom, and financial aid workflows where change actually has to happen. Universities also still require a large amount of operational data work—accreditation reporting, state reporting, enrollment analysis—which means a purely “product” model is unrealistic.

A more realistic framing for higher education might be analytics as embedded change support, not product development. That means partnering with operational units, supporting implementation, measuring behavioral change, and feeding those lessons back into the analytics model. In that framing the role of data is not only to inform decisions but to help institutions change practices.

Musical Coda

Having spent the past few days in the Midwest, I have been thinking about Susan Werner’s “Barbed Wire Boys,” her classic song about Midwesterners and the things left unsaid. It captures something essential. Its closing line, about “the unsung song,” feels like an apt metaphor for this week’s theme: the deeper forces that often remain unspoken in our institutions.

Well I come from the rural Midwest

It's the land I love more than all the rest

It's the place I know and understand

Like a false-front building, like the back of my hand

And the men I knew when I was coming up

Were sober as coffee in a Styrofoam cup

There were Earls and Rays, Harlans and Roys

They were full-grown men, they were barbed wire boys

They raised grain and cattle on the treeless fields

Sat at the head of the table and prayed before meals

Prayed an Our Father and that was enough

Pray more than that and you couldn't stay tough

Tough as the busted thumbnails on the weathered hands

They worked the gold plate off their wedding bands

And they never complained, no they never made noise

And they never left home, these barbed wire boys

'Cause their wildest dreams were all fenced in

By the weight of family, by the feeling of sin

That'll prick your skin at the slightest touch

If you reach too far, if you feel too much

So their deepest hopes never were expressed

Just beat like bird's wings in the cage of their chest

All the restless longings, all the secret joys

That never were set free in the barbed wire boys

And now one by one they're departing this earth

And it's clear to me now 'xactly what they're worth

Oh they were just like Atlas holding up the sky

You never heard him speak, you never saw him cry

But where do the tears go that you never shed?

Where do the words go that you never said?

Well there's a blink of the eye, there's a catch in the voice

That is the unsung song of the barbed wire boys

And while we’re on the subject of things that go unsaid, I will confess to one small unspoken hope: that if you enjoy this newsletter you might quietly forward it to a friend or colleague. I promise not to mention it again for at least another week.

Thanks for being a subscriber.